Over the past few weeks, we've participated in various IT forums and developer conferences. In these settings, the debate surrounding artificial intelligence (AI) in software development has intensified. Its impact on productivity is hard to deny: by and large, everyone reports gains.

What was once seen as an undeniable advance is now being analyzed with more nuance. The question that lingers is unsettling: are we losing capabilities as developers in an era where AI makes our work so much easier?

Increasingly, articles and experts are proposing this hypothesis, comparing it to the so-called reverse Flynn effect. Traditionally, the Flynn effect describes how average IQ has increased generation after generation, a rise attributed to improved educational and cognitive conditions. However, some recent studies suggest that in certain areas we may be seeing the opposite trend: reliance on smart tools may be limiting our capacity for critical thinking and problem-solving.

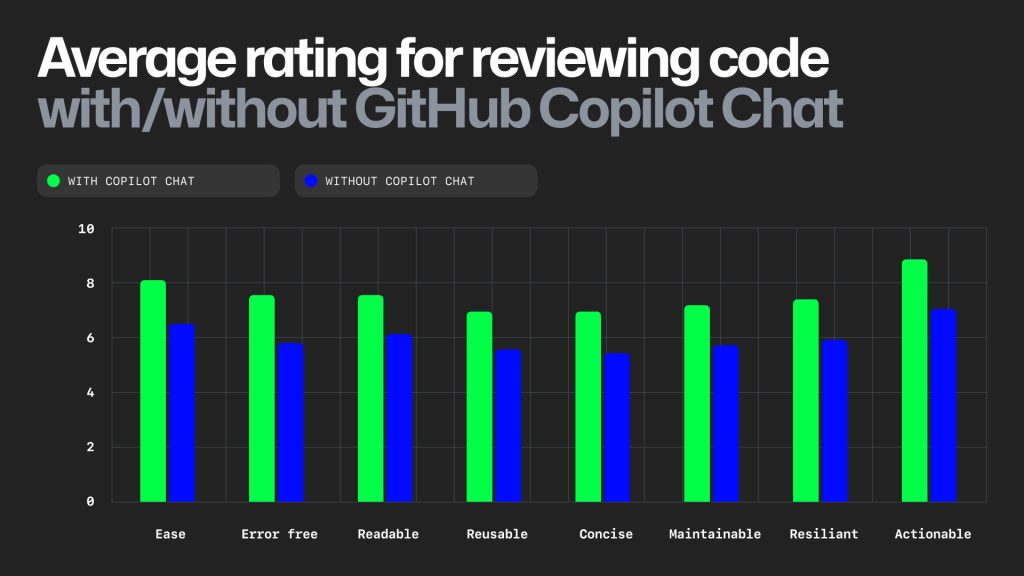

In software development, this translates into a clear phenomenon. Generative AI tools have proven to be an efficient way to accelerate code writing, but the quality of their output isn't always optimal. In fact, several studies show that AI-generated code must be reviewed with particular care. Automation speeds up production, but it often leaves behind maintenance and debugging work that, in some cases, can negate the initial speed gains. Even so, this rethinking of programming work is clearly a paradigm shift: people want to learn to program with co-pilots.

This scenario presents an interesting paradox: the time saved in development is transferred to a greater effort in maintenance. Does this equation make sense? The answer depends on the value assigned to each phase of the process. If AI generates code quickly, but then significant time is needed to review, debug, and improve it, then the net gain in productivity is not so clear.
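To make that equation concrete, here is a minimal sketch of the arithmetic in Python. The function name and every figure in it are hypothetical, chosen only for illustration, and are not measurements from any study: the point is simply that the same speed-up in writing can produce a net gain or a net loss depending on how much review and debugging follows.

```python
def net_time_saved(baseline_hours, ai_writing_hours, extra_review_hours):
    """Hours saved on a task once extra review and debugging time is counted.

    baseline_hours: time to write the code without AI assistance (assumed)
    ai_writing_hours: time to produce the code with AI assistance (assumed)
    extra_review_hours: additional review, debugging, and cleanup (assumed)
    """
    return baseline_hours - (ai_writing_hours + extra_review_hours)


# Hypothetical figures, for illustration only.
light_review = net_time_saved(baseline_hours=10, ai_writing_hours=4, extra_review_hours=3)
heavy_review = net_time_saved(baseline_hours=10, ai_writing_hours=4, extra_review_hours=7)

print(light_review)  # positive: the AI-assisted path still comes out ahead
print(heavy_review)  # negative: maintenance has eaten the initial gain
```

In both cases the writing phase is equally fast; only the cost of the later phase changes the outcome, which is why the value assigned to each phase matters.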

That's why it's important to avoid trivializing these advances and to delve deeper into their real impact. It's not about rejecting AI, but about understanding the role of these tools: they are co-pilots, not pilots. A co-pilot can help a pilot make faster and safer decisions, but should never completely replace the pilot's judgment and experience. The same applies to software development. AI cannot replace human judgment, the ability to reason about a system's architecture, or the intuition gained through experience.

The future of development isn't about choosing between AI and developers, but about finding the right balance between the two. Knowing when to trust AI and when to intervene manually will be the difference between high-quality code and code that merely appears functional. Artificial intelligence is a powerful tool, but it still relies on human intelligence to reach its full potential.